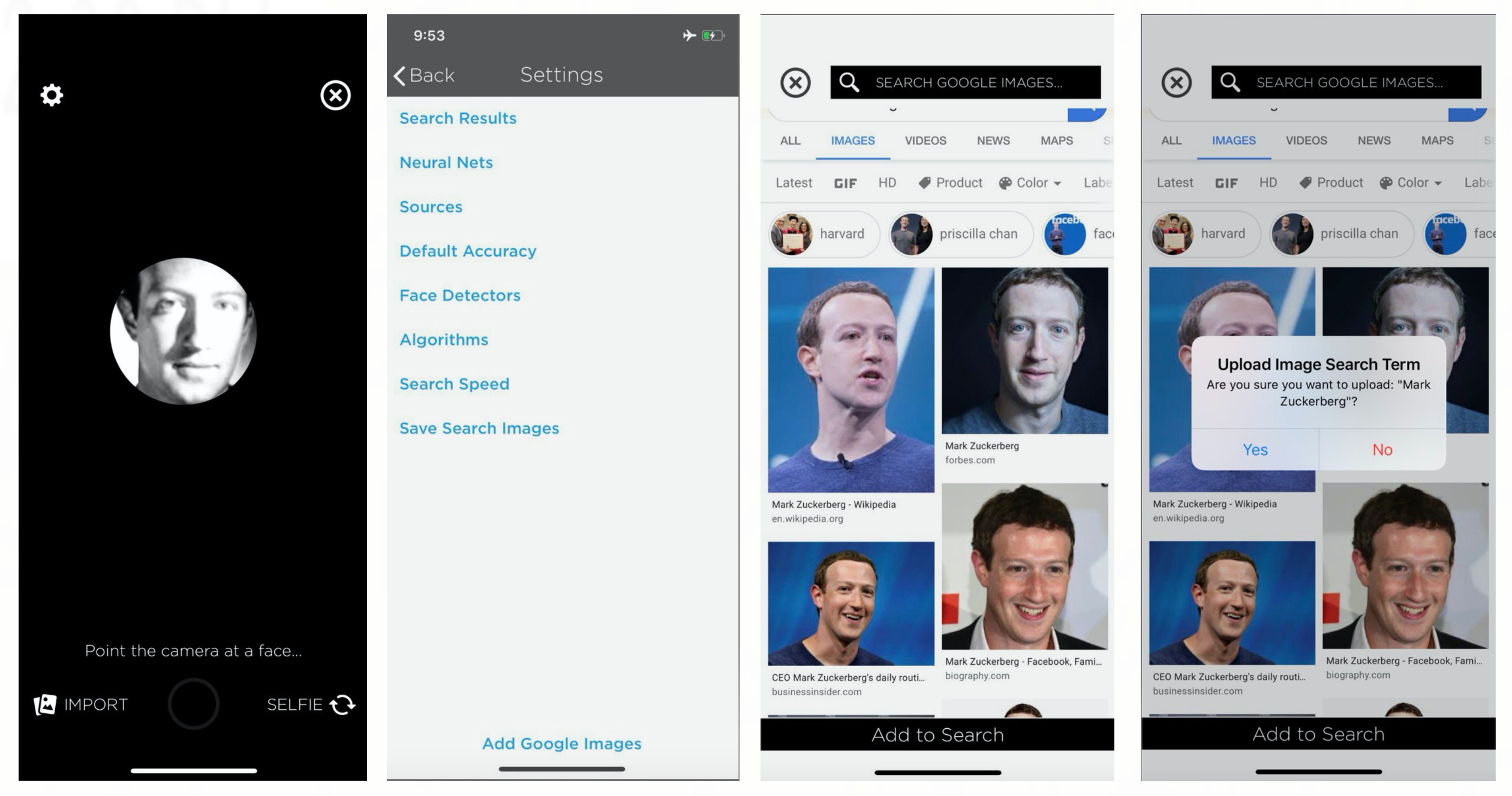

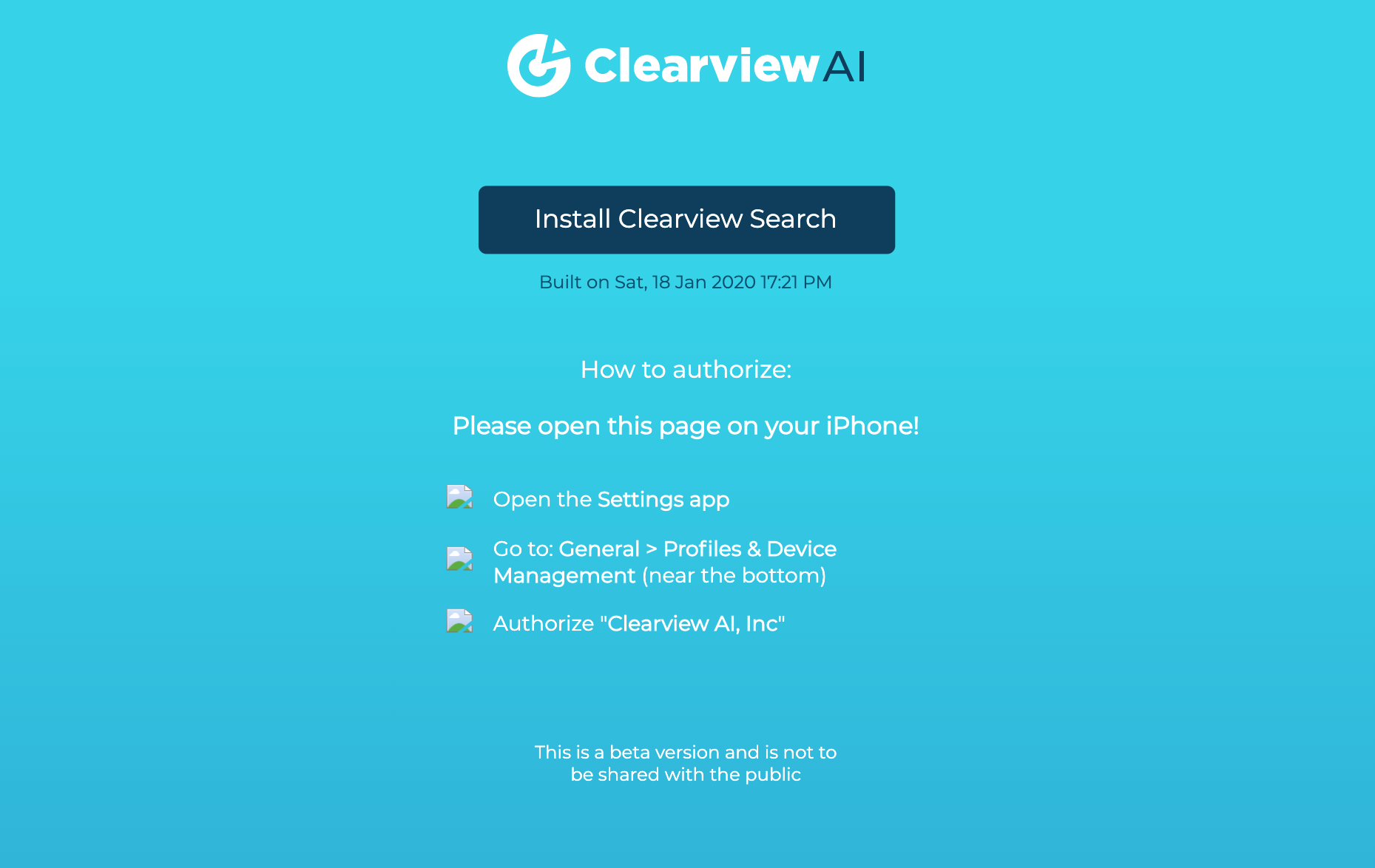

“When Australians use social media or professional networking sites, they don’t expect their facial images to be collected without their consent by a commercial entity to create biometric templates for completely unrelated identification purposes,” commissioner Angelene Falk said. “The indiscriminate scraping of people’s facial images, only a fraction of whom would ever be connected with law enforcement investigations, may adversely impact the personal freedoms of all Australians who perceive themselves to be under surveillance.”

But Clearview AI has stood its ground, saying it operates legitimately in Australia and intends to appeal the decision.

Clearview AI is a facial recognition service that claims to have built up enormous databases, containing more than 3bn labelled faces, through the controversial practice of scraping photos from Facebook and other social media sites. Last year, it was revealed the US-based company had offered trial services to police in Australia, specifically Queensland police, Victoria police and the Australian federal police. Reports suggested more than 2,000 law enforcement agencies across the globe had been using Clearview’s services in early 2020. In response to the scandal, the Office of the Australian Information Commissioner (OAIC) launched an investigation in cooperation with the UK’s Information Commissioner’s Office in July 2020.

Mark Love, a lawyer for BAL Lawyers acting for Clearview AI, said the company had gone to “considerable lengths” to cooperate, and claimed “the commissioner has not correctly understood how Clearview AI conducts its business”. He said Clearview AI intends to appeal to the Administrative Appeals Tribunal. “Clearview AI operates legitimately according to the laws of its places of business,” he said. “Not only has the commissioner’s decision missed the mark on the manner of Clearview AI’s manner of operation, the commissioner lacks jurisdiction.”

The Australian founder and CEO of Clearview AI, Hoan Ton-That, said he respects the effort the commissioner put into evaluating the technology he built but said he is “disheartened by the misinterpretation of its value to society”.

In its response to the OAIC, Clearview AI argued its images were collected from the internet without requiring a password or other security clearance. The company also said the images held by Clearview AI were published in the US, not Australia, so Australian privacy law should not apply. The OAIC report noted Clearview AI has not offered services to Australian organisations since March 2020, but the commissioner said that didn’t go far enough and all images of people in Australia must be removed.

Police forces in Australia have downplayed their use of the service, and the OAIC’s investigation found Clearview did not have any paid customers in Australia. However, the OAIC report found officers in Australia had successfully searched for suspects, victims and themselves in the Clearview databases. The OAIC is still finalising a report on the AFP’s trial of the technology and whether it complied with the federal privacy code for government agencies.

The Italian Data Protection Authority (DPA) came down hard on the US company Clearview AI Inc., which, by an order of 10 February 2022, was fined 20 million euros for violating Articles 5, 6, 9, 12, 13, 14, 15 and 27 of the EU General Data Protection Regulation. The proceedings arose from a complex investigation conducted with the assistance of other European authorities and initiated ex officio against Clearview AI Inc.

Clearview is a US company that developed advanced biometric search and face detection software, marketed mainly to specific categories of customers such as law enforcement agencies or government authorities. In brief, the Clearview software automatically collects, through web scraping, images publicly accessible on the web (including social networks, websites, blogs and videos) and stores them in its own database, where they remain archived even if the original images are later removed or made private. The images are processed to extract the identifying characteristics of each one and are then transformed into vector representations that follow the distinctive lines of a human face. Finally, they undergo hashing for database indexing purposes. Each image is enriched with other metadata, such as the link to the source from which it was extracted, geolocation, gender, date of birth or nationality of the subject represented in the image.
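To make the description of the Clearview pipeline more concrete (a face reduced to a vector, the vector hashed for database indexing, and metadata such as the source link stored alongside it), here is a minimal sketch in Python. It is an illustration only, not Clearview's actual method: the reports do not say which hashing scheme is used, so random-hyperplane locality-sensitive hashing is swapped in as one common way to index vectors, and every name here (`FaceRecord`, `FaceIndex`, `lsh_key`, the example URL, the four-number vector) is hypothetical.

```python
import random
from collections import defaultdict
from dataclasses import dataclass


@dataclass
class FaceRecord:
    vector: list[float]  # hypothetical face vector (a real one would be much longer)
    source_url: str      # metadata: link to the page the image came from


def make_hyperplanes(dim, bits, seed=0):
    """Draw `bits` random hyperplanes in `dim` dimensions (seeded, so repeatable)."""
    rng = random.Random(seed)
    return [[rng.gauss(0.0, 1.0) for _ in range(dim)] for _ in range(bits)]


def lsh_key(vector, hyperplanes):
    """Hash a vector to an integer: one bit per hyperplane, set to 1 when the
    vector lies on the non-negative side of that hyperplane."""
    key = 0
    for plane in hyperplanes:
        dot = sum(v * p for v, p in zip(vector, plane))
        key = (key << 1) | (1 if dot >= 0 else 0)
    return key


class FaceIndex:
    """Toy index: records whose vectors hash to the same key share a bucket."""

    def __init__(self, dim, bits=8, seed=0):
        self.hyperplanes = make_hyperplanes(dim, bits, seed)
        self.buckets = defaultdict(list)

    def add(self, record):
        self.buckets[lsh_key(record.vector, self.hyperplanes)].append(record)

    def candidates(self, vector):
        """Return the records stored in the bucket that `vector` hashes to."""
        return list(self.buckets.get(lsh_key(vector, self.hyperplanes), []))


index = FaceIndex(dim=4)
record = FaceRecord([0.1, -0.3, 0.7, 0.2], "https://example.com/photo.jpg")
index.add(record)
matches = index.candidates([0.1, -0.3, 0.7, 0.2])
```

In this sketch a lookup hashes the query vector and only inspects the matching bucket, which is why hashing helps with indexing a very large collection. The stored `source_url` also shows why a record can outlive the original image: the index keeps its own copy of the vector and metadata.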
